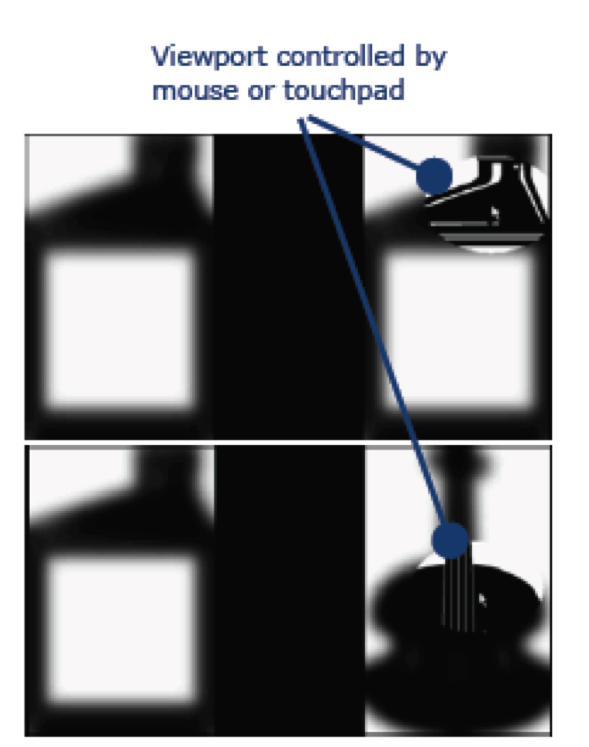

The VPC-W task is similar to the eye-tracking-based VPC task, but the image viewing interface is modified to elicit and capture the subject’s image examination behavior (right figure).

Specifically, two main techniques are used: (1) blurring of the stimulus images and (2) an oval-shaped Viewport, or oculus, that tracks the cursor position. As in the VPC task, a subject performing the VPC-W task is shown two images side by side, but the images are blurred so that only the general outline of each image is recognizable, not its details. The subject directs the location of the Viewport (the area of focus) with a computer mouse or touchpad. The Viewport reveals image details by showing the corresponding part of the original, un-blurred image inside the oculus. In Figure 2, the Viewport is positioned over the right image in the familiarization and test phases.
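The blur-and-reveal mechanism described above can be sketched in a few lines. This is only an illustrative sketch under stated assumptions, not the interface used in the study: the box blur stands in for whatever blur the authors applied, the image is a plain grayscale grid, and the names `box_blur`, `inside_oval`, and `render_viewport`, as well as the radii `rx` and `ry`, are hypothetical. In an interactive setting, `(cx, cy)` would be taken from the current cursor position.

```python
from typing import List

# Assumption: a grayscale image stored as rows of pixel intensities.
Image = List[List[float]]


def box_blur(img: Image, radius: int) -> Image:
    """Blur by averaging each pixel with its neighbors.

    A simple box blur is assumed here; the source does not specify the blur.
    """
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            # Average over the window, clipped at the image borders.
            for ny in range(max(0, y - radius), min(h, y + radius + 1)):
                for nx in range(max(0, x - radius), min(w, x + radius + 1)):
                    total += img[ny][nx]
                    count += 1
            out[y][x] = total / count
    return out


def inside_oval(x: int, y: int, cx: float, cy: float,
                rx: float, ry: float) -> bool:
    """True if pixel (x, y) lies inside the ellipse centered at (cx, cy)."""
    return ((x - cx) / rx) ** 2 + ((y - cy) / ry) ** 2 <= 1.0


def render_viewport(original: Image, blurred: Image, cx: float, cy: float,
                    rx: float, ry: float) -> Image:
    """Show un-blurred pixels inside the oval and blurred pixels elsewhere."""
    h, w = len(original), len(original[0])
    return [
        [original[y][x] if inside_oval(x, y, cx, cy, rx, ry) else blurred[y][x]
         for x in range(w)]
        for y in range(h)
    ]


if __name__ == "__main__":
    # Hypothetical 9x9 checkerboard stimulus.
    stimulus = [[255.0 * ((x + y) % 2) for x in range(9)] for y in range(9)]
    blurred = box_blur(stimulus, radius=1)
    # Cursor assumed at the image center; the oval is wider than it is tall.
    frame = render_viewport(stimulus, blurred, cx=4, cy=4, rx=3, ry=2)
    for row in frame:
        print(" ".join(f"{v:5.1f}" for v in row))
```

Blurring the stimulus once and compositing per frame keeps the per-cursor-move work to a simple mask test, which is one plausible design choice for keeping the Viewport responsive as the cursor moves.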